New fund update; early thoughts on generative AI boom; the carbon zero movement

Happy New Year! Stay tuned on the opportunity fund that I mentioned in the last post - I’m looking into an exciting option to team up with some other GPs for a bigger fund. I’m also searching for a part-time intern - if you know any good candidates, please direct them to message me on LinkedIn.

I’m not writing 2023 predictions now, because I did it a year ago! I wouldn’t change many of these, other than the overly optimistic B2B credit exits, which will be delayed due to the macroeconomic climate. But the list is notable for what’s missing - the generative AI explosion.

There’s quite a bit of press claiming that OpenAI’s GPT is the first genuine threat to Google’s search supremacy, such as NYTimes’ “Code Red” article, and WaPo’s “Google Faces a Serious Threat from ChatGPT”. It’s mostly off base and illustrates how complex machine learning can be under the hood.

ChatGPT is useful for creative production of entertainment, and for summarizing, but not for providing accurate base knowledge at scale. I’m surprised there haven’t been many articles on ChatGPT with callbacks to Meta’s Galactica controversy. Galactica was an LLM that was only online for 3 days in November 2022 before it was pulled. It was a science-focused model designed for complex queries with the express aim of giving factually accurate answers. Although it may have performed well internally on test data, once it got out into the wild it returned a lot of incorrect information. Because an LLM knows how to statistically combine phrases in a way that sounds “smart”, that information was very persuasive even when it was wrong. It’s undeniably impressive that the style, grammar and vocabulary of a response can pass the Turing test, but Meta immediately realized that this was also dangerous.

ChatGPT suffers precisely the same defect. People playing with a free beta don’t realize that neither OpenAI nor Google can afford to field a chatbot as a business proposition (whether subscription or ad supported) that gives blatantly wrong answers to factual questions, in ways that the average user will not be able to detect. This is true even if right answers are a high percentage of results.

Google’s PageRank and Google’s “Knowledge cards” that appear at the top of some results pages are very different technologies, and most of the media analysis glosses over the distinction between (i) routing you to the top 10 authoritative sites that may not have the info you were looking for (but likely have accurate and trusted info), in which case you’ll merely be annoyed, and (ii) providing you an answer directly from Google, where you are guaranteed to be unhappy if you rely on misinformation.

ChatGPT uses a snapshot of Internet information from 2021 as training data. Retraining an LLM costs far more than updating PageRank, and cannot be done effectively on a daily basis. The genius of PageRank is that a site’s ranking is based on the number and quality of other sites that link inbound to it, so when a crawl of the web finds new links, matrix math can rapidly update the rankings. The number of such links is extremely small relative to the number of possible links between all the sites on the Internet (i.e. it’s a sparse matrix), and the update doesn’t depend on retraining model weights based on every word on the site (this is admittedly an over-simplification of the modern form of PageRank, but this isn’t meant to be an SEO tutorial).

Perhaps some people believe that as of 2021, most of the information they need to know is already on the Internet. But that’s not the reality of Google’s business - a very significant portion of searches, especially those that lead to AdWords clicks, relate to recent news, online transactions/commerce, or local information, all of which changes rapidly and is not useful if it is stale. Even the “how to” educational information that people are receiving from ChatGPT, which doesn’t change often, is growing less valuable over time, because the younger generation is tilting towards wanting video information (hello YouTube and TikTok). To keep GPT-3, -4, and -N up to date, OpenAI will need to spend so much money that any service built on top of it will be much more expensive than Google search. Admittedly, there may be certain B2B verticals where it’s worth the price to the customer, but then we are no longer talking about a Google killer.
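For readers who want to see why a link-based update is cheap, here is a minimal sketch of the textbook PageRank power iteration, not Google’s production system. The function name `pagerank`, the damping value of 0.85, the iteration count, and the four-page `toy_web` are all assumptions made up for illustration. The point is that each pass only touches links that actually exist, and never looks at the words on any page.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Textbook PageRank by power iteration.

    `links` maps each page to the list of pages it links out to.
    Storing only existing links is the sparse representation.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Rank held by pages with no outbound links is spread evenly.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        base = (1.0 - damping) / n + damping * dangling / n
        new_rank = {page: base for page in pages}
        # Each page passes an equal share of its rank along each outbound
        # link, so the work per pass scales with the number of links.
        for source, targets in links.items():
            if not targets:
                continue
            share = damping * rank[source] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page web, invented for illustration only.
toy_web = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}
ranks = pagerank(toy_web)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this toy web, page "c" collects the most inbound links and comes out on top, while "d" has none and lands at the bottom. Adding a newly crawled link means adding one entry to the dictionary and rerunning a few passes, which is a very different cost profile from retraining model weights.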

The third problem with the Google killer story is that it doesn’t fit the “innovator’s dilemma” pattern, in which a much cheaper service proves “good enough” for users even though the conventional wisdom is that its quality is too low relative to market standards. Even if people decide that the efficiency of ChatGPT makes up for some degree of embedded misinformation, at Google’s scale it is not going to be cheaper, which is why Google hadn’t deployed the similar competing technologies that it had already developed in house before OpenAI made GPT available.

Now with that said, the type of machine learning model underpinning ChatGPT (transformers) is tremendously exciting for generating new images, video and other media for creative purposes. People are starting to use anthropomorphic terms like “hallucinating” or “dreaming” to describe these outputs, and it doesn’t feel like hyperbole. That’s fantastic, and it has all sorts of intriguing business implications that I’ll be exploring in a future post.

The other topic on my mind recently is the news about “net” energy production from the laser fusion method. It is a big milestone, even with the acknowledgment that charging the lasers used far more energy than the reaction released, and that most experts think we’re decades away from commercializing clean fusion energy. But I’m not rushing to invest in fusion startups. There’s a lot of basic physics risk that’s difficult to assess, a ton of capex, and more importantly, I want to support energy startups that will have an outsize impact on carbon emission reduction.

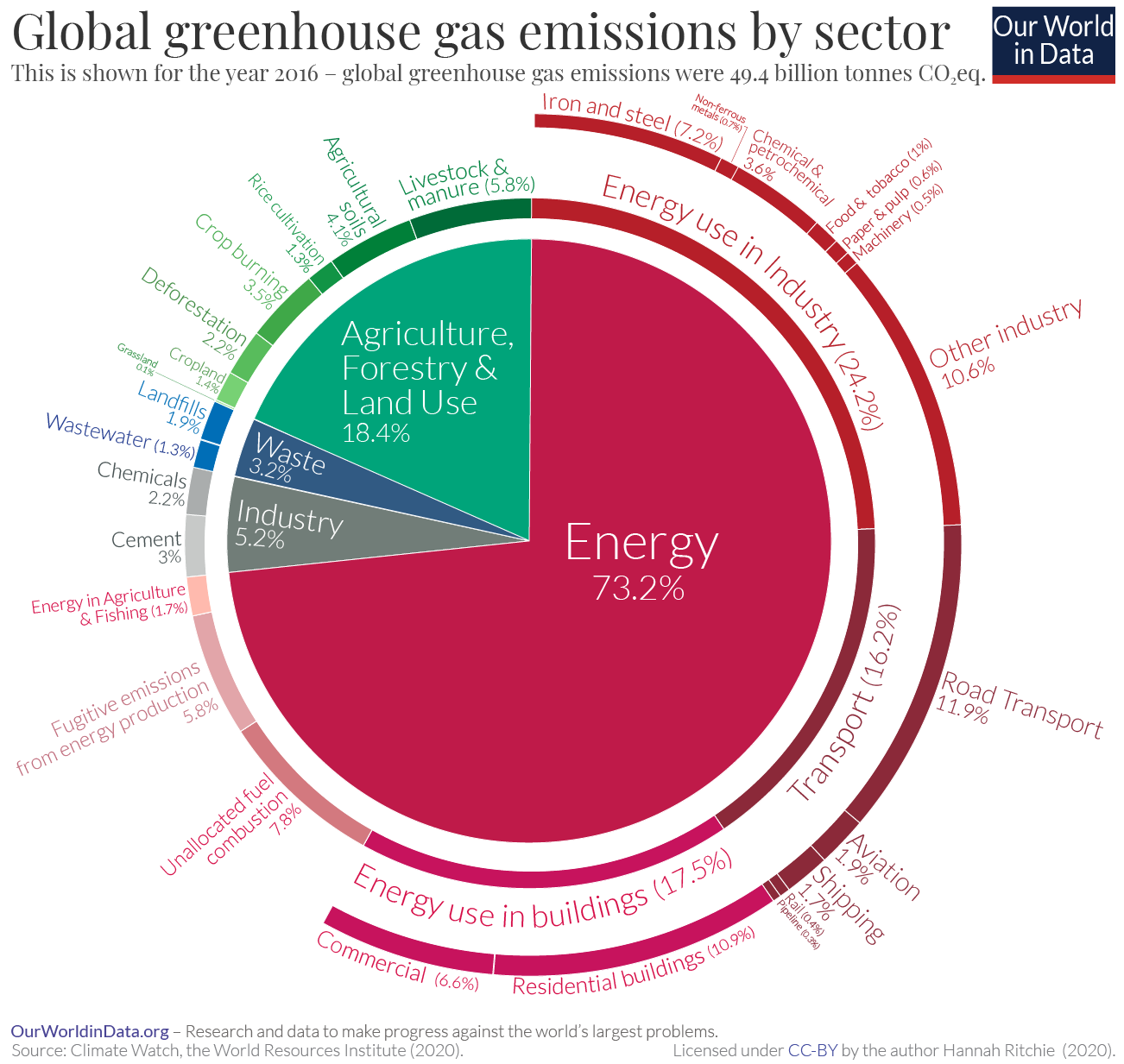

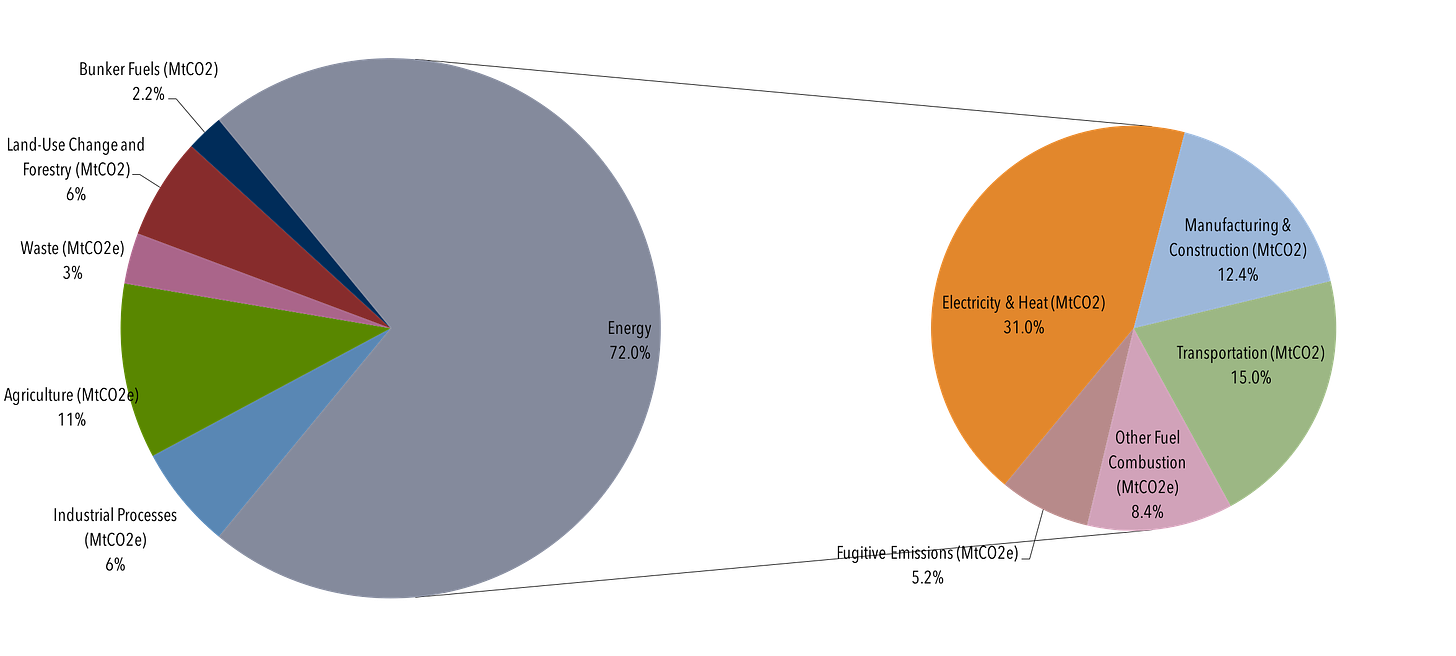

Based on my reading lately, including Vaclav Smil’s How the World Really Works and Energy and Civilization, I think the biggest carbon impact and business opportunities are no longer in moving off of fossil fuels for vehicles (e.g. electric vehicles), but in new ways of making the key materials that drive the highest carbon emissions: ammonia (a critical input to fertilizer and therefore the world’s food supply); plastic; cement; and steel (the latter three making the largest contribution to the manufacturing, construction and industry sectors). Collectively, producing these materials drives anywhere from 1-2X the carbon emissions of transportation fuel, depending on the data source you consult.

These 4 sectors create emissions both as a direct output of the production process, and upstream because of the energy used in the production process. Many data sources do not combine these two factors to give a full carbon impact assessment for a particular sector, keeping “energy” separate, but Smil does.

Here’s one recent snapshot that puts transportation at 16% and the sum of the others at roughly the same level.

And this data source below ends up closer to 2X (i.e. 30% is the sum of industrial processes, agriculture, and the energy usage from manufacturing/construction). Smil’s numbers for cement and plastics are higher than the World Resources Institute’s, and land somewhere in between the two estimates.

Source: Climate Analysis Indicators Tool

There are no good substitutes for these 4 pillars of society in growing economies, and most importantly, the growth rate of carbon emissions from them is faster (along with home heating and electrical usage) than the growth rate of carbon emissions from transportation fuel, especially in emerging economies (source: https://www.climatewatchdata.org/ghg-emissions).

I’ll be investing in a carbon-absorbing cement startup in Q1, and Asymmetry has backed startups with platform technologies for making carbon-neutral plastics. I’m also interested in meeting startups that (i) can increase the ratio of agricultural yield to fertilizer, or (ii) provide alternatives to steel.